Michael Douma

A few minutes on…

What I’d propose for CIF.org.

Generative Engine Optimization.

Me and new AI-powered things.

March 2026

What Changes I Would Propose for CIF.org

I reverse-engineered 9 user personas for CIF.org

AI analysis of the site’s content architecture, tone, jargon, and navigation pathways revealed nine distinct audiences, from bond investors to Indigenous community leaders.

What users like

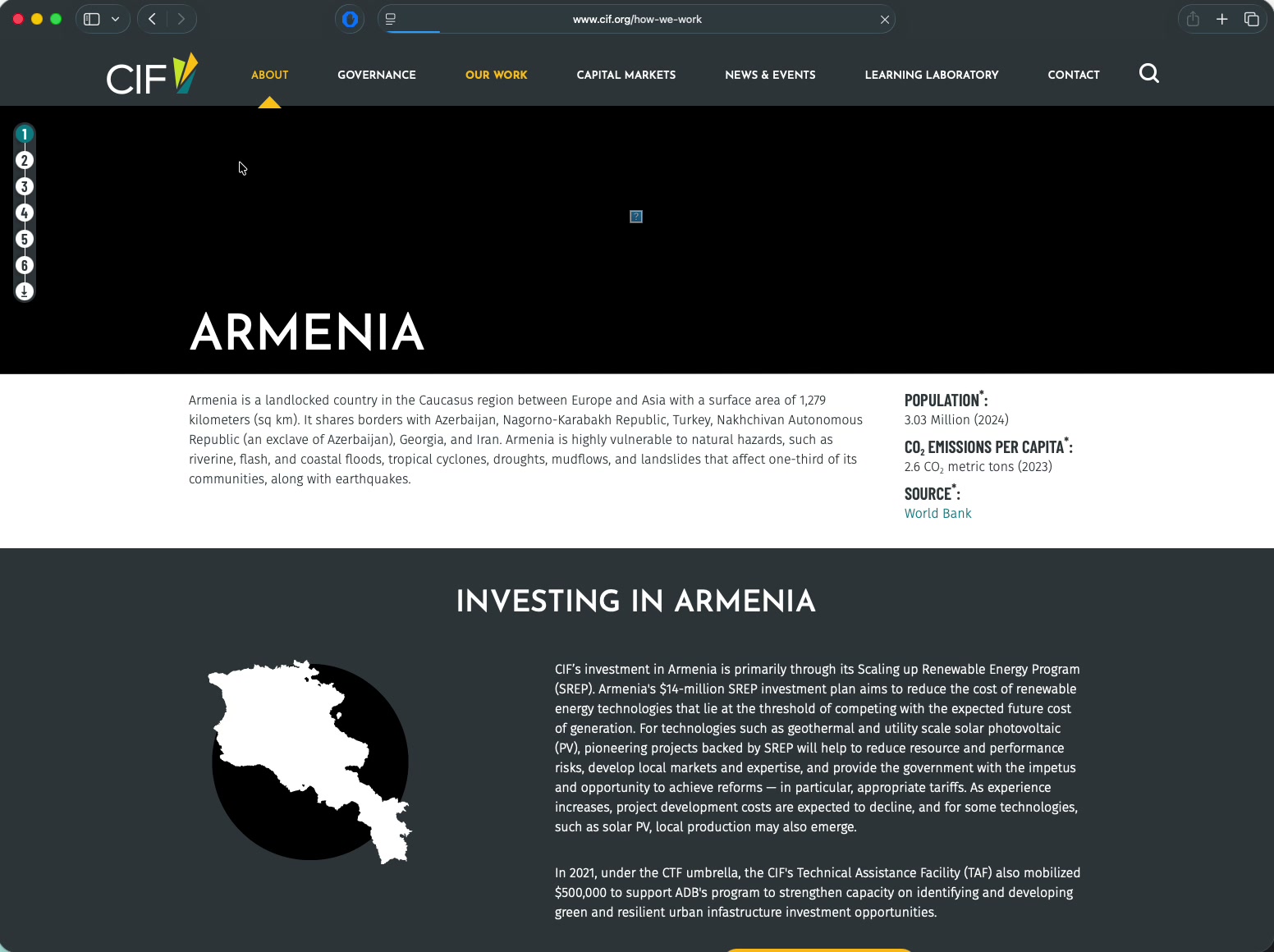

73 Country Dashboards

- Most-praised feature across 5 personas

- Population, emissions, funding in one place

Decision Tracker

- Searchable governance database — best in class

- Valued by govts, MDBs, donors, CSOs, researchers

Knowledge Hub

- 13 publication types, multi-faceted search

- Tiered: full report → brief → article

Co-financing Data

- 1:10 ratio is CIF’s most powerful proof point

- Clear donut charts with data timestamps

Governance Transparency

- Meeting archives back to 2016

- Exceeds most multilateral fund peers

Visual Credibility

- Clean institutional aesthetic, strong brand

- DGM pages + ChangeMakers prove accessible writing works

What users find problematic

No audience entry points

- All 9 personas land on the same homepage

- No “For Governments” / “For Communities” routing

Showcase over utility

- Site is a brochure first, a working tool second

- Homepage helps no one do their actual job

Results are aggregate-only

- 42.7M tons CO2 — no country/program breakdown

- “87 of 173 reporting” buried in footnotes

Nav follows the org chart

- M&R Toolkits hidden under “Learning Laboratory”

- One workflow touches 5 of 7 nav sections

Misallocated real estate

- Capital Markets gets a full nav slot

- DGM (only direct grants) buried 2 levels deep

No current calls page

- Funding info scattered across news, governance, and the Knowledge Hub

- Contact page: 3 generic emails for 9 audiences

It’s about usability and clear paths to data

The site looks good. The content is rich. The problem is structural — it’s not organized around how people actually find and use what they need.

Listen first

Hear from the team: what’s been tried, what worked, what didn’t, and what constraints shaped the current site. Learn the history; don’t assume a blank slate.

Map the user journeys

Follow each persona’s actual path through the site. Where do they get lost? Where do they give up? The data is in the analytics — supplement it with the persona research.

Focus on the architecture

If a user can’t find what they need in three clicks, the structure isn’t working. Strip back to the information architecture and make every path to data clear and direct.

Beautiful and usable are not in tension. But usable has to come first.

Making CIF.org the source AI engines quote

When someone asks ChatGPT “What are the largest multilateral climate funds?” — CIF.org should be cited. Structural gaps prevent this today.

Drop in traditional search volume by 2026 (Gartner)

Surge in AI referral traffic, 2025 holiday season (Adobe)

Sources cited per AI answer. You must be one of them.

Visibility boost from GEO techniques (Princeton/Georgia Tech, KDD 2024)

Current Quick Links — no structured data for AI crawlers

How LLMs decide what’s worth quoting

Beyond metadata and schema, there’s a fundamental principle: LLMs, and the retrieval systems that feed them, favor specific, distinctive, quotable facts. If CIF’s pages read like generic brochure copy, AI engines will skip them for a source that offers concrete claims.

What AI engines ignore

“CIF supports ambitious climate action in developing countries through its portfolio of programs.” This is interchangeable with any fund’s about page. Nothing distinctive to retrieve. Nothing to cite.

What AI engines quote

“CIF has deployed $12.5B across 81 countries, leveraging $73B in co-financing at a 1:10 ratio — making it the world’s largest multilateral climate finance mechanism by leverage.” — Specific. Unique. Citable.

Lead with numbers

Every program page should open with its specific stats: dollars deployed, countries reached, tons mitigated.

Make claims comparative

“Largest,” “first,” “only” — superlatives give LLMs confidence that this is the primary source. CIF has many legitimate firsts.

Front-load each page

44% of AI citations come from the first 30% of a page. The most important facts must appear in the first two paragraphs, not in a PDF.
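A quick script could audit this. The sketch below is a minimal illustration, not an existing CIF tool: `facts_in_lead` and the sample text are hypothetical, and it assumes the page has already been reduced to plain text. It reports which key facts appear in the first 30% of a page.

```python
def facts_in_lead(page_text: str, key_facts: list[str], lead_fraction: float = 0.30) -> dict[str, bool]:
    """Report, per fact, whether it appears in the leading fraction of the text."""
    cutoff = int(len(page_text) * lead_fraction)
    lead = page_text[:cutoff].lower()
    return {fact: fact.lower() in lead for fact in key_facts}


# Hypothetical page text: headline numbers first, one key fact at the very end.
sample_page = (
    "CIF has deployed $12.5B across 81 countries. "
    + "Background detail. " * 20
    + "Co-financing ratio is 1:10."
)

report = facts_in_lead(sample_page, ["$12.5B", "81 countries", "1:10"])
for fact, found in report.items():
    print(f"{fact}: {'in lead' if found else 'move higher'}")
```

Character position is only a rough proxy for "first 30% of a page"; a fuller audit would probably measure against rendered content rather than raw text.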

Fix metadata & add structured data

CIF.org has zero JSON-LD structured data. The homepage title reads “CIF” — not “Climate Investment Funds.” AI engines can’t identify the entity.

- Fix title: “Climate Investment Funds (CIF) | Accelerating Climate Action in Developing Countries”

- Add Organization schema with founding date, World Bank relationship, mission

- Add Article/Report schema to 400+ publications with author, date, dateModified

- Add FAQPage schema to every program page, answering questions such as “How does CTF work?”

- Deploy llms.txt at site root mapping key resources

Pages with 3+ schema types are ~13% more likely to be cited in AI answers.
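As a rough sketch of the llms.txt item above: the format is a proposed convention (a Markdown file with an H1 title, a blockquote summary, and H2 sections of links), not a ratified standard. The helper `build_llms_txt` and every URL path below are placeholders invented for illustration; the real file would map CIF’s actual pages.

```python
def build_llms_txt(title: str, summary: str, sections: dict[str, list[tuple[str, str, str]]]) -> str:
    """Assemble llms.txt content: H1 title, blockquote summary, H2 link lists."""
    lines = [f"# {title}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder URLs: the paths are hypothetical, not CIF's real site structure.
llms_txt = build_llms_txt(
    "Climate Investment Funds (CIF)",
    "Multilateral climate finance for developing countries.",
    {
        "Data": [
            ("Country Dashboards", "https://cif.org/PLACEHOLDER-dashboards",
             "Population, emissions, and funding by country"),
            ("Decision Tracker", "https://cif.org/PLACEHOLDER-decisions",
             "Searchable governance database"),
        ],
        "Publications": [
            ("Knowledge Hub", "https://cif.org/PLACEHOLDER-knowledge",
             "Reports, briefs, and articles"),
        ],
    },
)
print(llms_txt)
```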

<script type="application/ld+json">
{
  "@context": "https://schema.org",
  "@type": "Organization",
  "name": "Climate Investment Funds",
  "alternateName": "CIF",
  "parentOrganization": { "@type": "Organization", "name": "World Bank" }
}
</script>
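The same pattern extends to the FAQPage item. Since program pages would likely be templated, here is a hedged sketch of generating the markup rather than hand-writing it. The function name `faq_jsonld` is hypothetical, and the answer text is a deliberate placeholder, not real CIF copy; the property names follow schema.org’s FAQPage, Question, and Answer types.

```python
import json


def faq_jsonld(qa_pairs: list[tuple[str, str]]) -> str:
    """Render question/answer pairs as a schema.org FAQPage JSON-LD script tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so answer text can never close the script element early.
    body = body.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder answer: the real text would come from the program team.
snippet = faq_jsonld([
    ("How does CTF work?", "PLACEHOLDER: a 2-4 sentence answer with specific figures."),
])
print(snippet)
```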

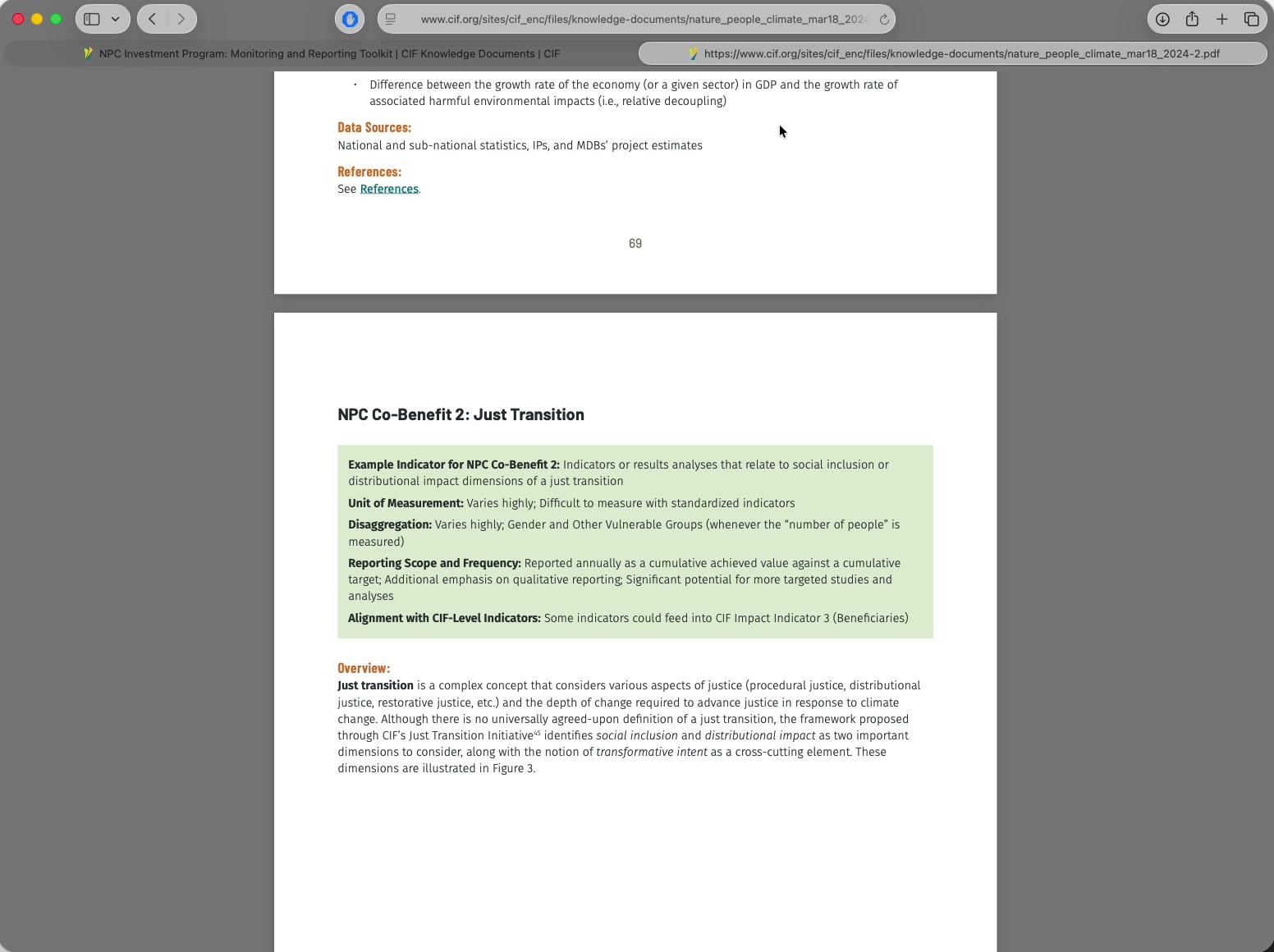

Convert PDFs to citable HTML & build topic clusters

CIF’s most valuable content (evaluations, investment plans) is locked in PDFs. AI crawlers extract and cite PDF content far less reliably than HTML.

- HTML landing pages for top 50 PDFs with executive summaries and extractable data

- Build topical authority clusters: “Climate Finance Mechanisms” pillar linking to program sub-pages

- Add 2–4 sentence “answer capsules” under every H2 heading

- Reduce navigation markup bloat — lazy-load faceted filters

Queries CIF should own

“What is the Clean Technology Fund?” · “Largest multilateral climate funds” · “How does concessional climate finance work?” · “Coal transition financing”

Build cross-platform authority so AI engines trust CIF

The strongest predictor of AI citations is brand search volume (0.334 correlation), not backlinks. 90% of AI citations come from earned & owned media.

- Audit & enrich Wikipedia articles about CIF — ChatGPT’s #1 source (7.8% of citations)

- Engage in social media discussions, such as Reddit climate finance threads, a top source for AI Overviews & Perplexity

- Full metadata + transcripts + chapters on all YouTube content

- Data-rich LinkedIn posts (29.4K followers) AI engines can reference

- Create a definitive “About CIF” page — single source of truth for AI extraction

- Allow AI search bots while blocking training bots in robots.txt
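The robots.txt item above can be checked before deployment. This is a minimal sketch using Python’s standard `urllib.robotparser`; the user-agent tokens reflect my understanding of the names vendors publish and should be verified against each vendor’s current documentation, and the test URL path is hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Draft policy: the split into "search" and "training" crawlers is an assumption to verify.
ROBOTS_TXT = """\
# Search and answer crawlers: allowed
User-agent: OAI-SearchBot
User-agent: PerplexityBot
Allow: /

# Training crawlers: blocked
User-agent: GPTBot
User-agent: CCBot
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def check_access(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Return, per user agent, whether the draft robots.txt permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


results = check_access(
    ROBOTS_TXT,
    ["OAI-SearchBot", "PerplexityBot", "GPTBot", "CCBot", "Google-Extended"],
    "https://cif.org/PLACEHOLDER-page",  # hypothetical path
)
for agent, allowed in results.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

robots.txt is advisory, so this only confirms what the file asks for, not what every crawler will honor.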

AI-referred visitors convert at 4.4x the rate of organic search visitors.

The opportunity

CIF has unique, authoritative, data-rich climate finance content no other source can replicate. The challenge is purely structural — making it discoverable, extractable, and citable.

Where to start

First Month

- Maintain continuity with whoever I’m replacing or augmenting

- Learn the team’s needs, workflows, and constraints

- Audit what’s in the back catalog — what can AI extract from existing PDFs, reports, and data?

First Quarter

- Map how different audiences actually use the site

- Write initial data-heavy specs for the vendor — metadata, structured data, machine-readable content

- Audit the broader ecosystem — Wikipedia, social media, other sites — for GEO impact

First Year

- A GEO/LLM-friendly site with structured, citable content

- Clearer paths to relevant information for different user types

- Cross-platform authority built with the comms team

Michael Douma

Science & Literacy

25 years in science, art, and cultural literacy. Time.gov at NIST, WebExhibits (350M views), SpicyNodes (40M users).

Games & AI Systems

A decade in games and large-scale AI. NSF-funded semantic database, 100M+ relationships, 130M AI operations. Founder experience.

Data Visualization

Deep interest in making complex data usable, browsable, and understandable for non-specialist audiences.

Climate

Personally interested in climate impacts, and in the real incentives that move the needle on reducing destruction and helping people adapt.

Why I’m Here

I want to work in climate and development. AI and data skills are my way in.

Communications Digital Officer · ETC2 · Req 35105

An NSF-Funded Project I Founded

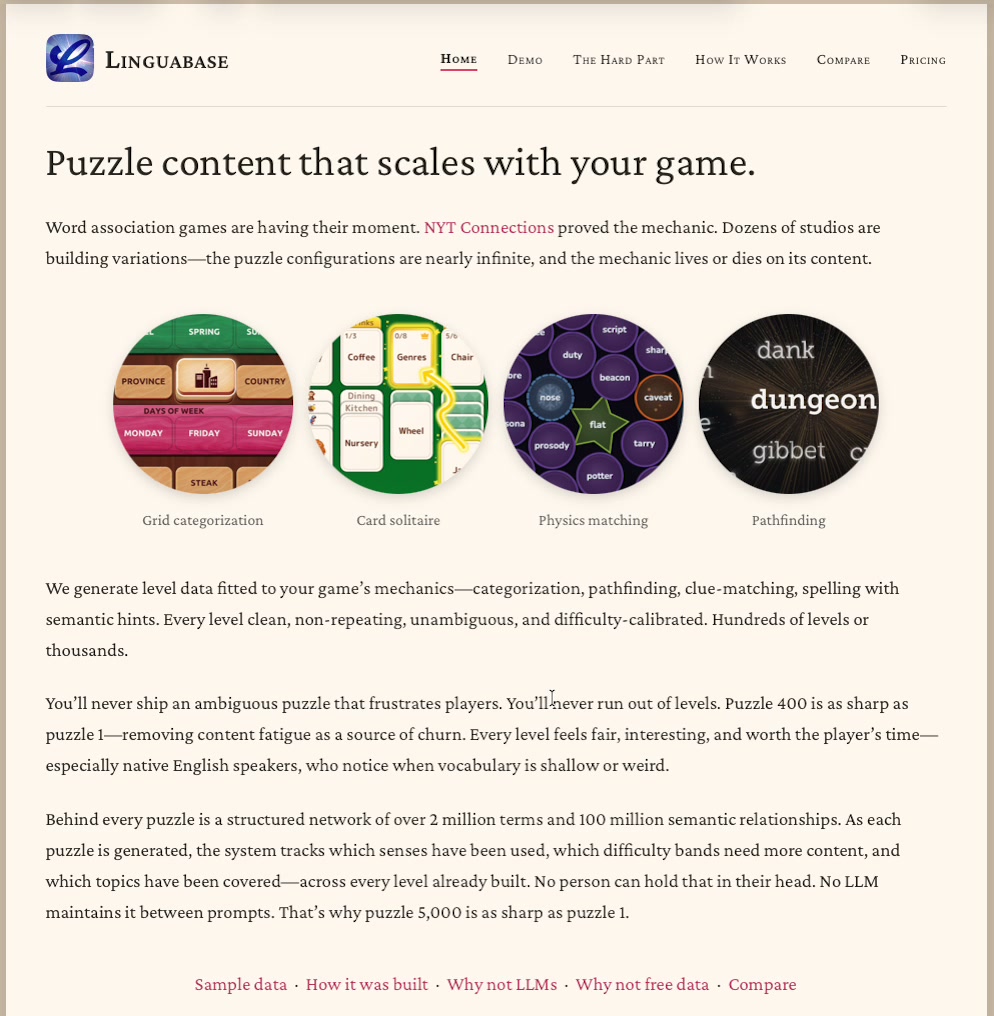

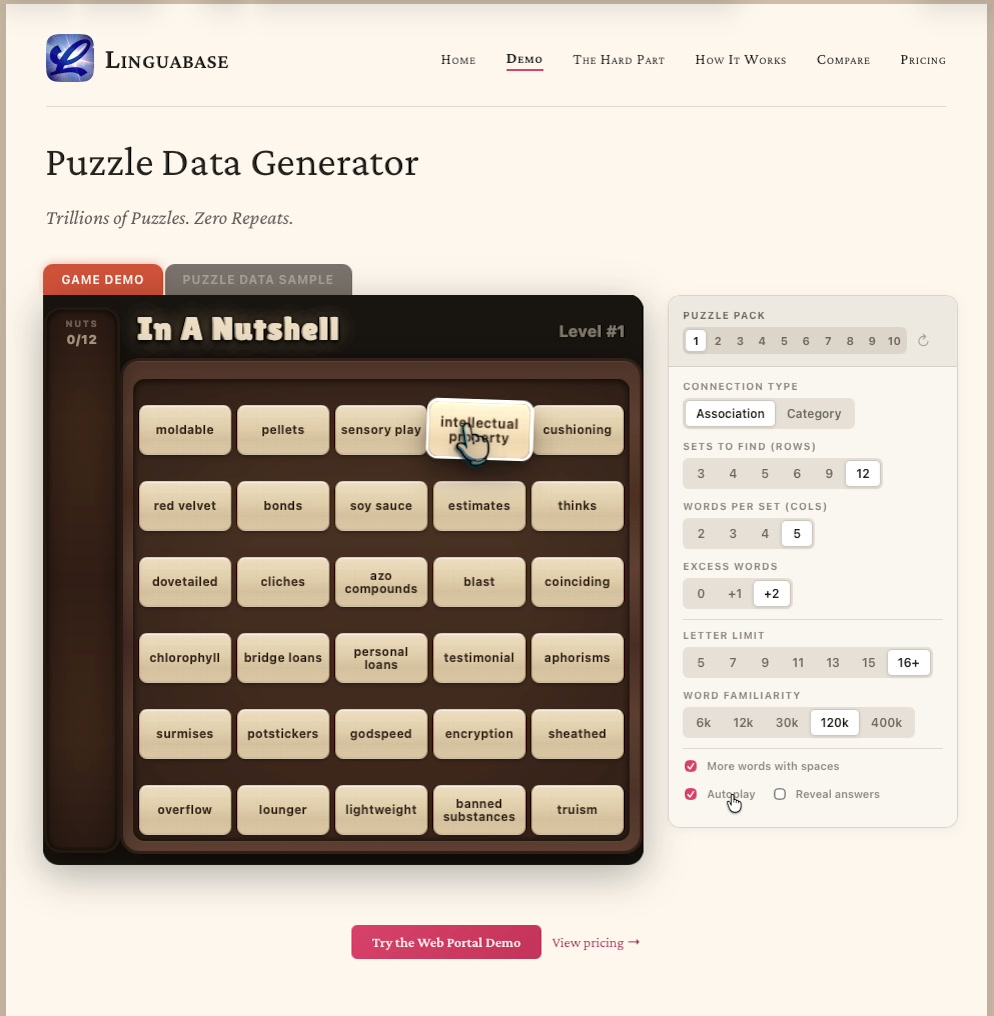

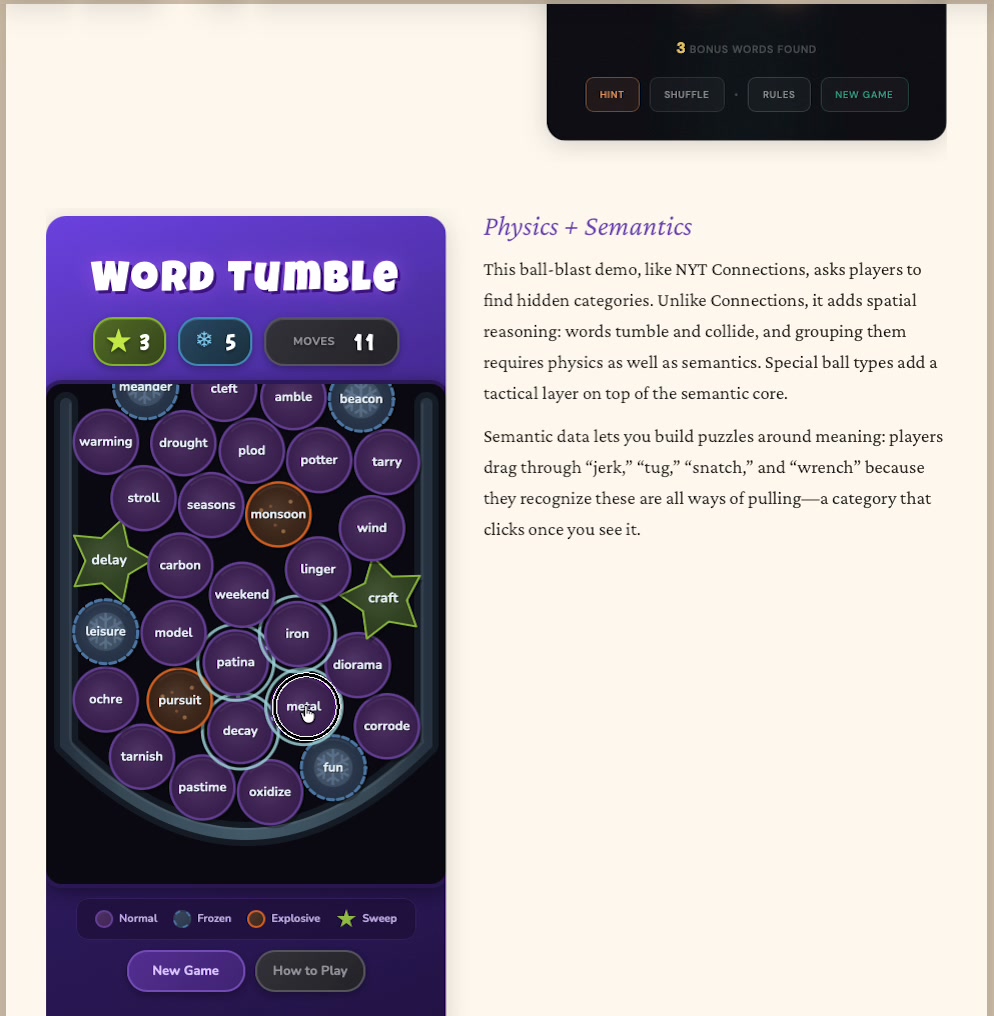

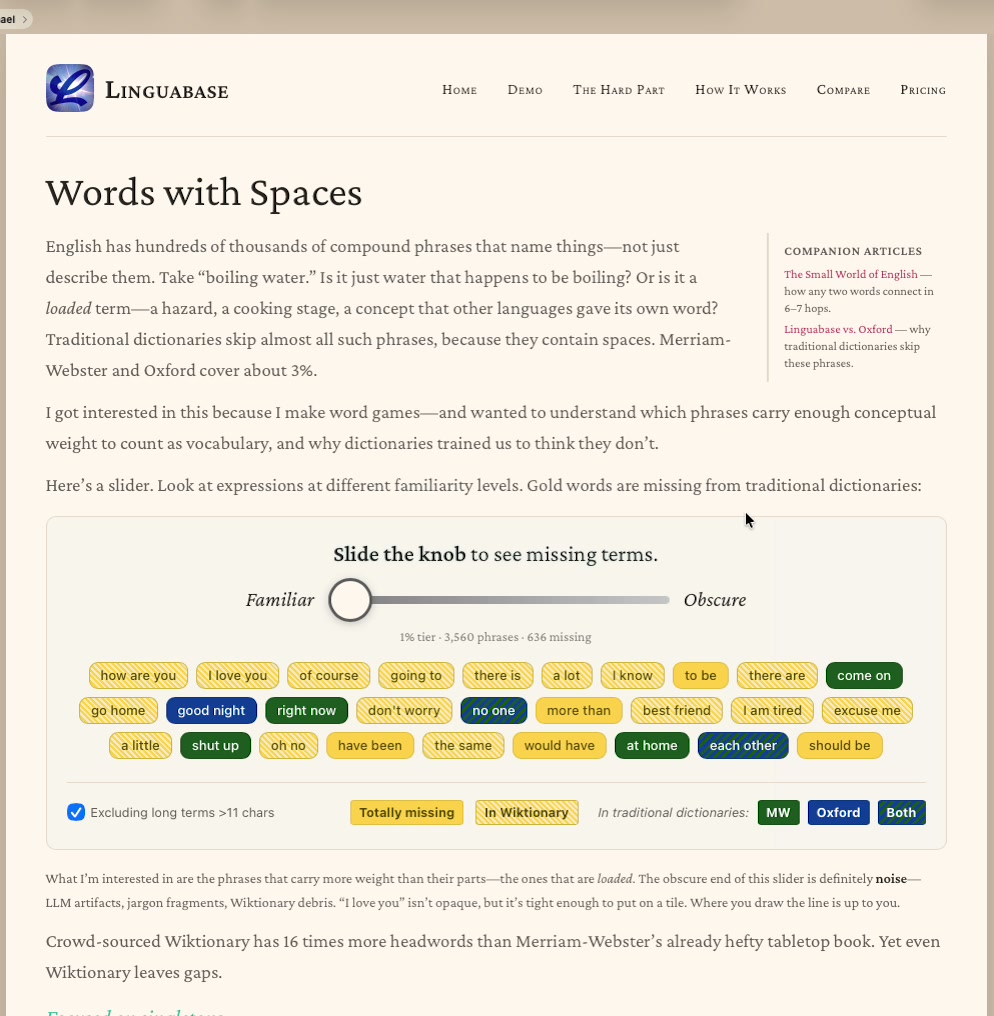

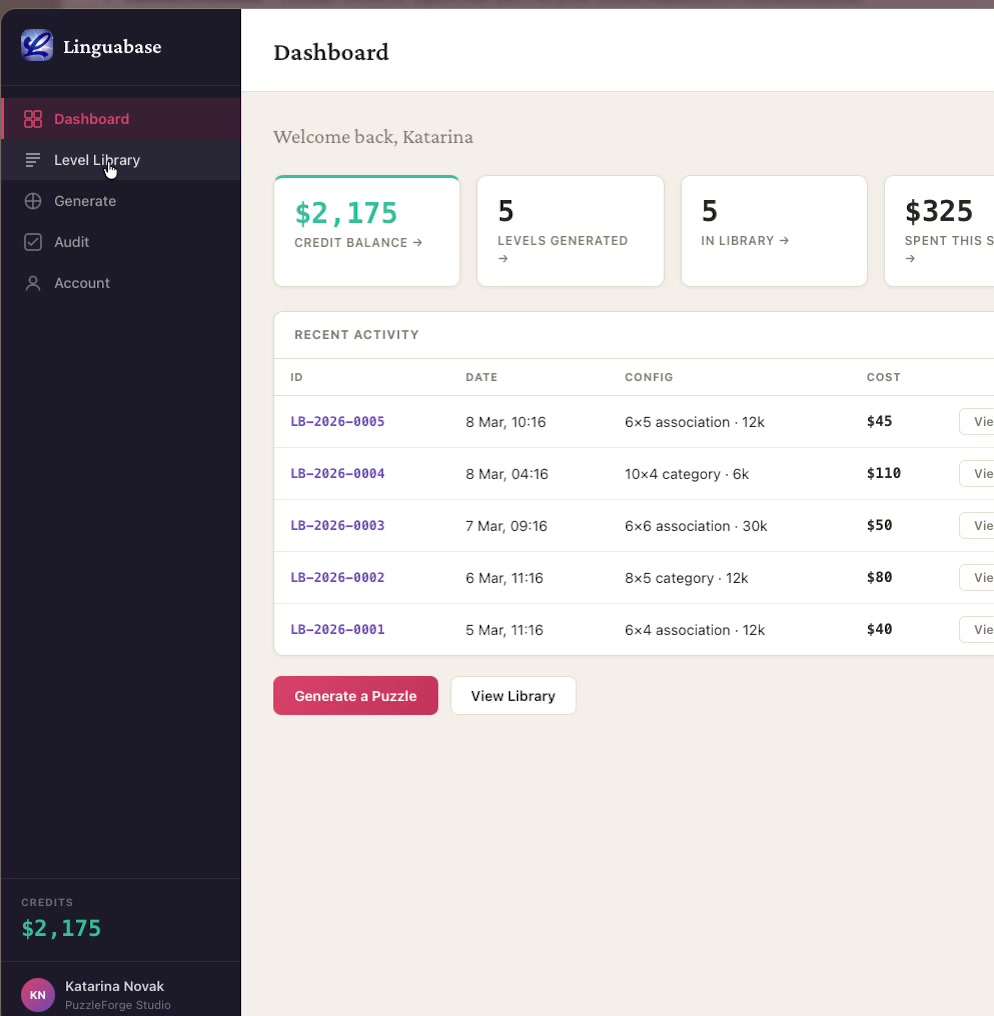

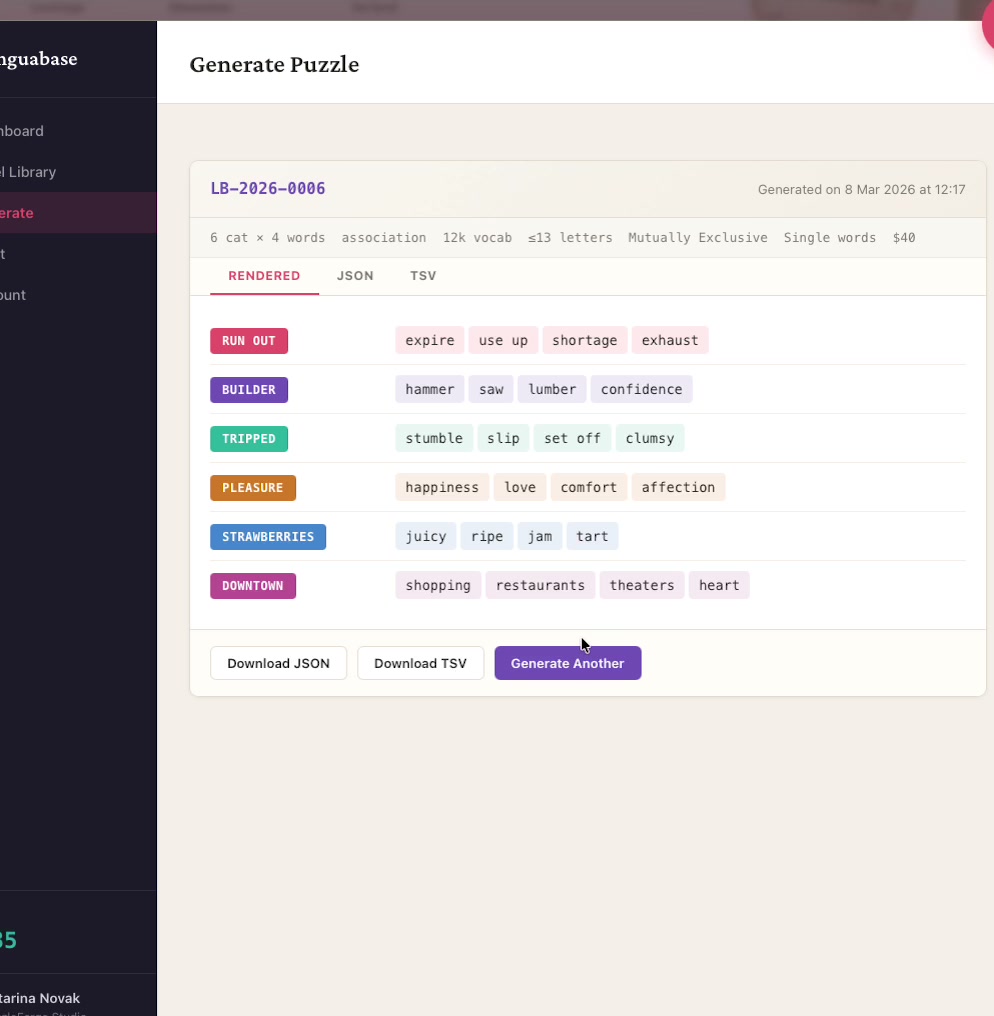

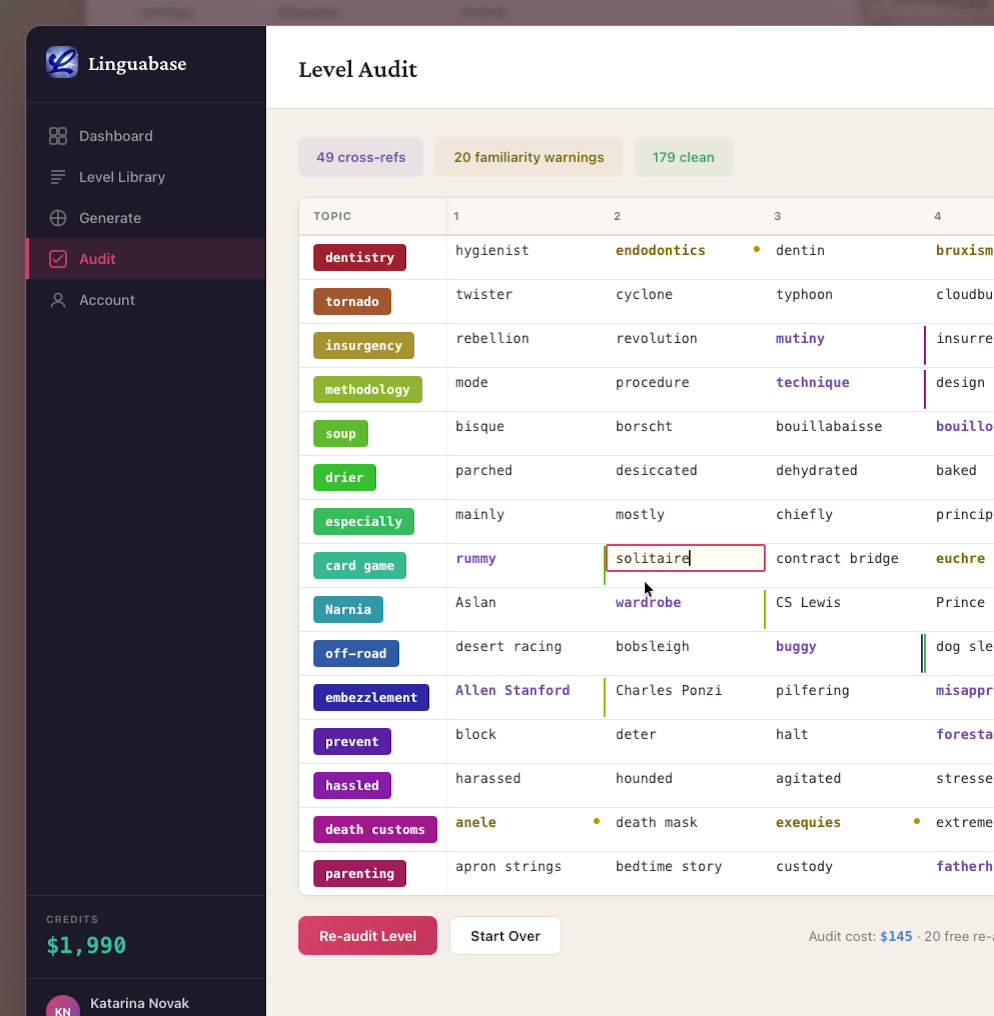

Linguabase: the world’s largest word-game data system

A structured graph of 400K words and 100M+ semantic connections, built over a decade with NSF supercomputer time, 70+ reference sources, and 130 million AI validation calls. Puzzle content for word games — clean, non-repeating, difficulty-calibrated, at any scale.

This is the anchor of the AI skills I’d bring to CIF — a decade of working at the intersection of structured data, language, and machine intelligence.

The entire website — built with agentic AI tools

Three weeks ago this site didn’t exist. Interactive games, data visualizations, a client portal — hand-coded, no frameworks, no build step. This is what’s possible now.

linguabase.org — static HTML + CSS + vanilla JS · GA4 · responsive · no frameworks